Post-Purchase AI Visibility: What Happens After the Sale

Your customers are asking AI engines questions after they buy. Find out who's answering them and what it's costing your retention and renewals.

Haritha Kadapa

Q: What is post-purchase visibility on AI engines?

Post-purchase visibility on AI engines refers to how your brand appears or fails to appear in AI responses when existing customers return with questions after buying. Prompts like "how do I track my claim," "why isn't the app working," or "should I upgrade my plan" are typed into ChatGPT, Perplexity, Gemini, and Meta AI every day. Most brands have no idea what answers their customers are getting. If your content isn't structured for AI citation at that moment, a competitor's help centre, a Reddit thread, or a generic article fills the gap instead.

Q: Why does post-purchase AI visibility matter beyond traditional customer support?

Your help centre, your customer success team, and your support tickets only capture customers who came to you. AI engines capture the ones who didn't: customers who typed a question into an AI model and got an answer from a competitor's troubleshooting page, a Reddit thread, or a generic how-to article that doesn't even reference your product correctly. According to Gravton's data for one insurance company, three operational prompts ("how to track my claim," "app not working," and "how to add my parents") represented roughly 22,500 monthly units of LLM demand, more than several pre-purchase clusters in the same audit. We estimate this by running continuous prompt sweeps across a broad set of AI platforms, then extrapolating volumes with a Bayesian model that uses similarity-weighted aggregation to arrive at monthly demand estimates. None of this demand was visible in the company's analytics. It existed whether or not they were measuring it.

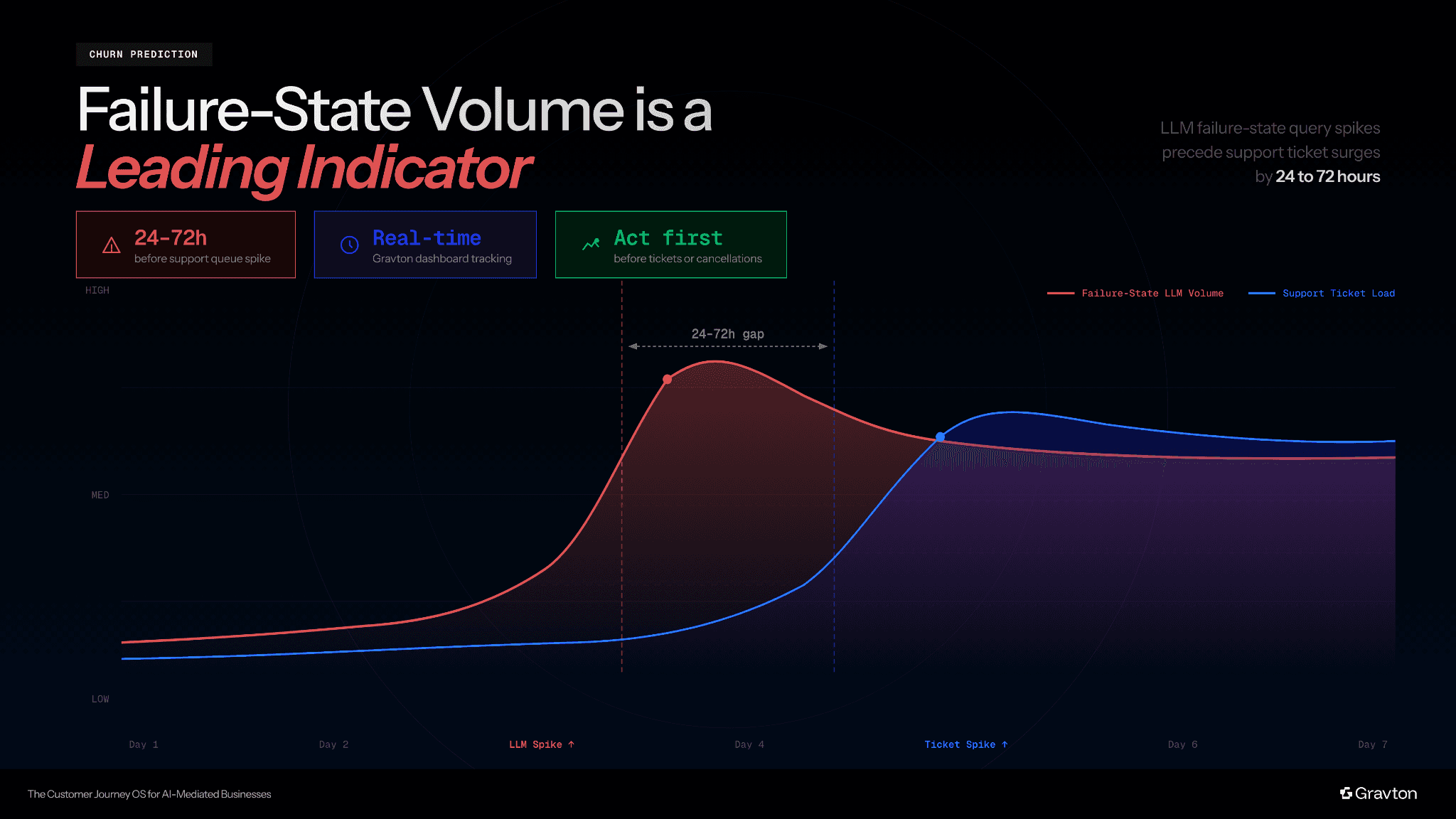

Q: Can post-purchase AI visibility predict churn before it happens?

Yes. Failure-State prompt volume is a leading indicator of support ticket load, typically by 24 to 72 hours. A spike in customers asking LLMs about errors, rejected transactions, or missing data often precedes the spike in your support queue. This gives your customer success team a window to act before those customers become tickets, escalations, or churned accounts.
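One way to check the lead time on your own data is a lagged correlation between daily Failure-State prompt counts and daily ticket counts. The sketch below is illustrative only: the function names, the five-day search window, and the daily counts are all made up for the example, and it is not a description of how Gravton computes the signal.

```python
from statistics import mean

def pearson(xs, ys):
    """Pearson correlation of two equal-length sequences (0.0 if either is constant)."""
    mx, my = mean(xs), mean(ys)
    cov = sum((x - mx) * (y - my) for x, y in zip(xs, ys))
    vx = sum((x - mx) ** 2 for x in xs)
    vy = sum((y - my) ** 2 for y in ys)
    if vx == 0 or vy == 0:
        return 0.0
    return cov / (vx * vy) ** 0.5

def best_lead_days(prompt_volume, ticket_volume, max_lag_days=5):
    """Return (lag, correlation) for the lag at which prompts best anticipate tickets."""
    best = (0, pearson(prompt_volume, ticket_volume))
    for lag in range(1, max_lag_days + 1):
        # Pair prompts on day t with tickets on day t + lag.
        r = pearson(prompt_volume[:-lag], ticket_volume[lag:])
        if r > best[1]:
            best = (lag, r)
    return best

# Hypothetical daily counts: prompts spike on days 3-4, tickets on days 5-6.
prompts = [100, 105, 98, 240, 260, 120, 101, 99, 103, 100]
tickets = [40, 42, 41, 39, 43, 95, 104, 50, 41, 40]
lag, r = best_lead_days(prompts, tickets)  # lag is 2 days for this toy series
```

A lag of one to three days on real data would be consistent with the 24 to 72 hour window described above. A lag of zero, or a weak correlation at every lag, would suggest the prompt signal is not leading your queue.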

Q: How does post-purchase AI visibility work differently for regulated industries like financial services and insurance?

In regulated industries, customers often don't reach out to support when they face compliance questions or claims-related issues. They ask an LLM instead. If your content isn't cited in that moment, a complaint forum or a competitor's help page fills the gap, shaping decisions in high-risk situations where accuracy and trust matter most.

Q: Does post-purchase AI visibility apply to B2B renewals?

Yes, directly. More than half of B2B buyers use AI tools to evaluate, onboard, and review their purchases. When a stakeholder asks an AI platform whether to renew, upgrade, or switch vendors, the AI response shapes that decision. If your brand is consistently cited with accurate onboarding outcomes, ROI evidence, and real-world use cases, it reinforces confidence at the renewal moment. If it isn't, or if a competitor's content fills the gap, you're losing renewal conversations you don't even know are happening.

Q: Can smaller brands benefit from tracking post-purchase AI visibility?

Smaller and mid-market brands are disproportionately exposed. A large brand has an ambient presence across the web that LLMs can draw from even without a structured post-purchase content strategy. A smaller brand doesn't. If your help centre content isn't structured for LLM citation with clear entity signals, definitive answers, and canonical URLs, then a generic article or a competitor's support page will fill the gap. A well-executed Adoption Health strategy can give a smaller brand significantly stronger LLM citation coverage than its size would otherwise warrant.

Q: What does Gravton actually measure, and how is it different from other AI visibility products?

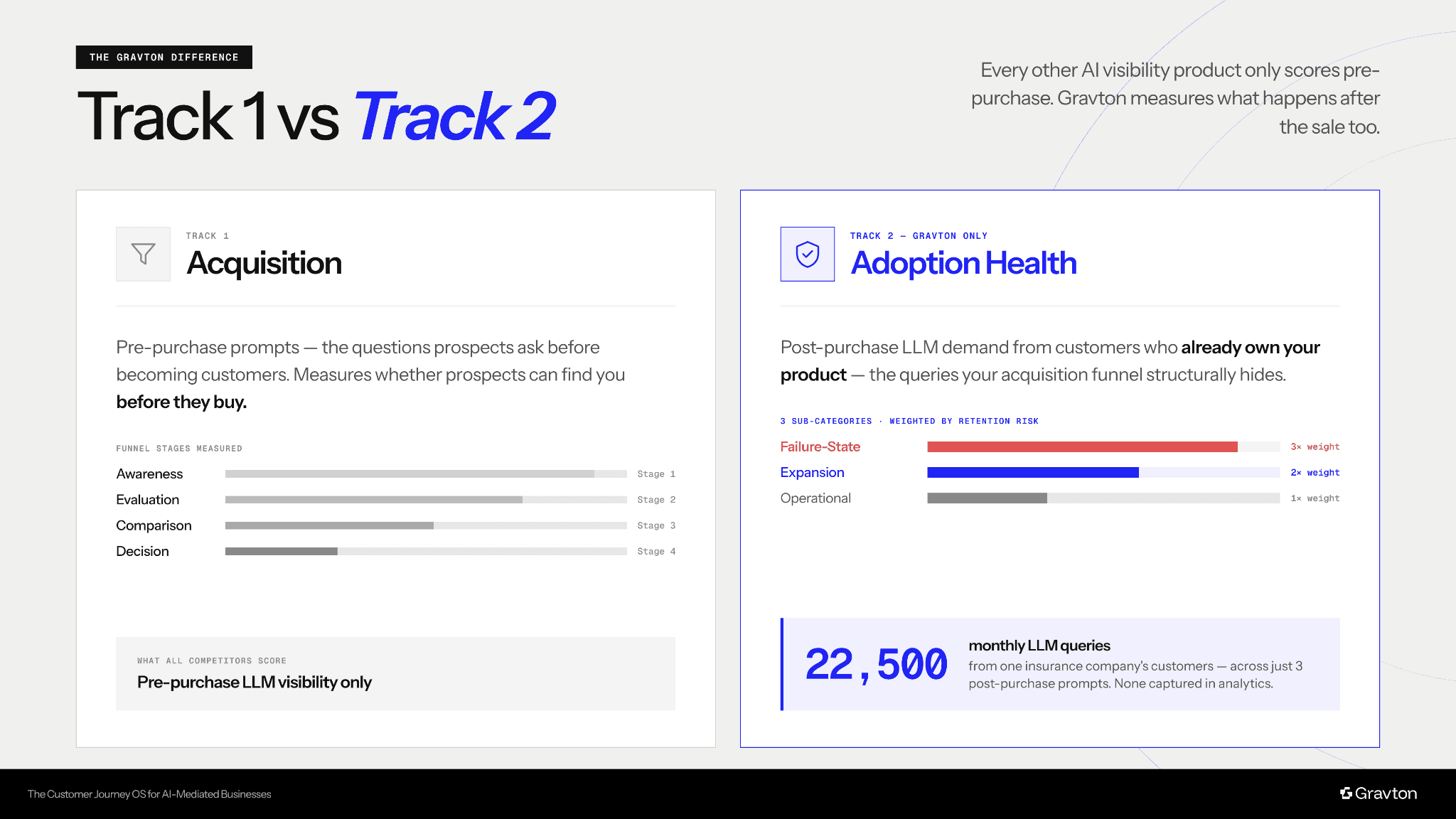

Every other AI visibility product scores only Acquisition (what we call Track 1). These are pre-purchase prompts, the questions prospects ask on the way to becoming customers. Those products measure whether prospects can find you before they buy, with revenue at stake, scored across four funnel stages: Awareness, Evaluation, Comparison, and Decision.

Gravton structures, measures, and scores Adoption Health (Track 2), that is, the post-purchase LLM demand from customers who already own your product.

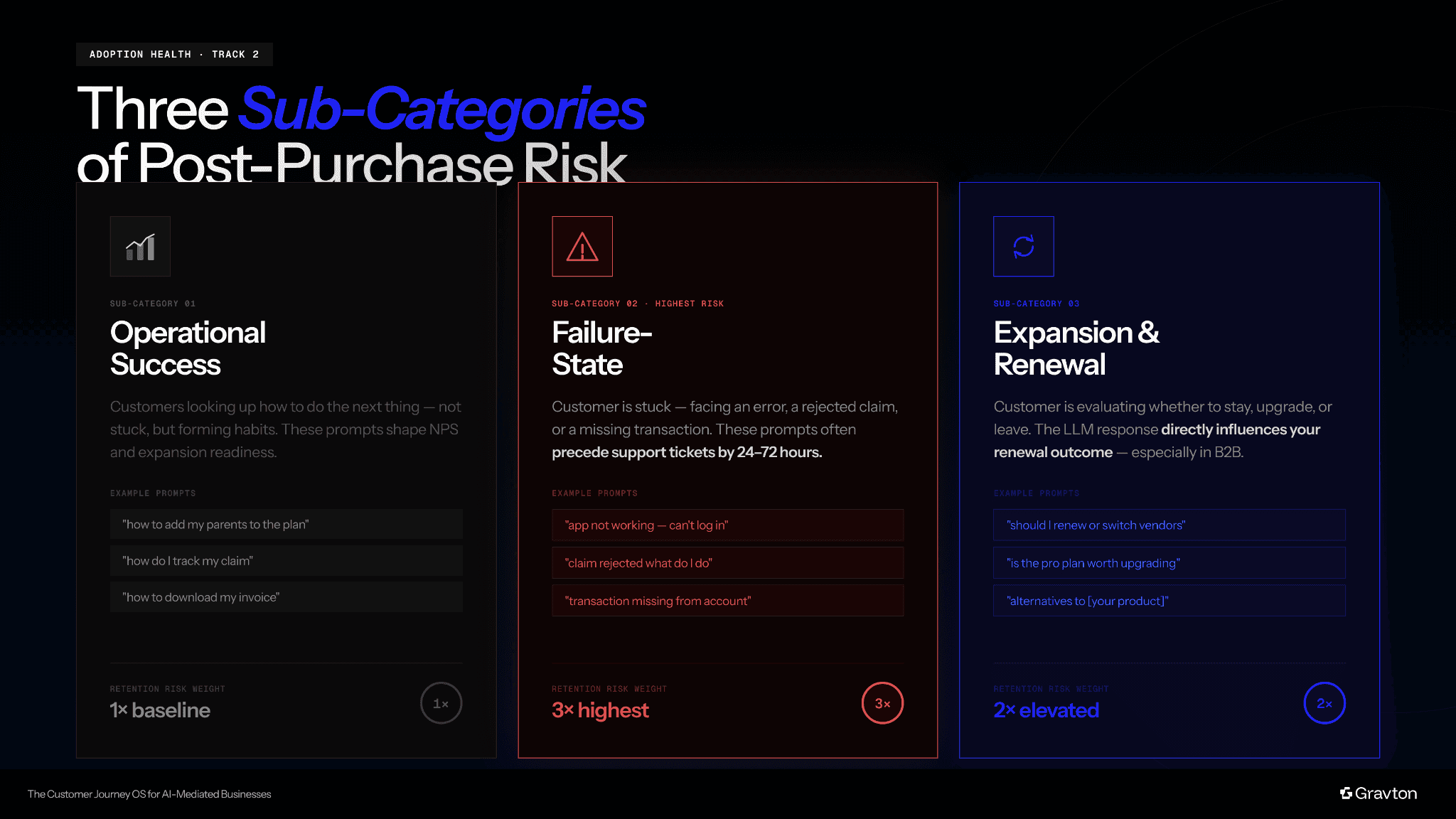

Track 2 splits into three categories.

Operational Success

These prompts come from customers looking up how to do the next thing. They are not stuck; they are forming habits. Whether your help centre or a competitor's content gets cited in that moment shapes NPS and expansion readiness.

Failure-State

These prompts are the highest-risk category. A customer is stuck, facing an error, a rejected claim, or a missing transaction. These prompts often precede support tickets by 24 to 72 hours, and if an LLM cites a competitor's workaround instead of your resolution content, that's a churn event in slow motion.

Expansion and Renewal

These prompts signal whether a customer is evaluating staying, upgrading, or leaving, and the LLM's response directly influences your renewal outcome.

Gravton scores Track 2 with a Retention Risk Score weighted by sub-category severity, prompt volume, and citation gaps.
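To make the three inputs concrete, here is a minimal sketch of how a score of this kind could be assembled. It is not Gravton's formula, which this article does not publish. The severity weights, the `Prompt` fields, and the 0 to 100 normalisation are all assumptions chosen for illustration. The only thing taken from the text above is that Failure-State carries the most weight.

```python
from dataclasses import dataclass

# Hypothetical severity weights. Only "Failure-State is highest" comes from the article;
# the relative order and size of the other two are assumptions.
SEVERITY = {
    "failure_state": 1.0,
    "expansion_renewal": 0.7,
    "operational_success": 0.4,
}

@dataclass
class Prompt:
    text: str
    category: str          # one of the SEVERITY keys
    monthly_volume: float  # estimated monthly LLM demand for this prompt
    citation_rate: float   # share of AI answers citing first-party content, 0..1

def retention_risk_score(prompts):
    """0-100: severity-weighted share of demand NOT covered by first-party citations."""
    exposed = sum(
        SEVERITY[p.category] * p.monthly_volume * (1 - p.citation_rate)
        for p in prompts
    )
    # Worst case: every prompt is top severity and never cites first-party content.
    ceiling = max(SEVERITY.values()) * sum(p.monthly_volume for p in prompts)
    return 100 * exposed / ceiling if ceiling else 0.0

# Made-up volumes and citation rates, for illustration only.
example = [
    Prompt("app not working", "failure_state", 1000, 0.5),
    Prompt("how to add my parents", "operational_success", 1000, 0.0),
]
score = retention_risk_score(example)
```

Under these assumptions, closing the citation gap on a Failure-State prompt lowers the score more than closing the same gap on an Operational Success prompt of equal volume, which matches the prioritisation described above.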

Figure 1: Acquisition (Track 1) vs Adoption Health (Track 2), i.e., pre-purchase prompts vs post-purchase queries.

Figure 2: Adoption Health (Track 2) dashboard with three sub-categories: prompts related to (i) Operational success, (ii) Failure-state, (iii) Expansion & Renewal.

Q: What does the Gravton dashboard show, and what metrics should I care about?

The Adoption Health tab is designed so a head of CS or marketing leader can answer three questions in under 30 seconds: how large is my customer-driven LLM query volume, which prompts are most dangerous to retention, and what should I publish first to address them? It surfaces the total monthly volume across the three subcategories, with a card per category showing the top prompts, your current LLM citation strength, and the business outcome at stake. Failure-State prompts get the highest visual weight. The tab sits completely separate from the acquisition dashboard, with its own visual system, so there's no ambiguity about which surface you're looking at.

The metrics that matter most are:

Retention Risk Score

A weighted measure of how much customer-driven AI query demand you’re not capturing, prioritised by how critical the prompt is to retention. Higher scores indicate a greater risk of support load, poor customer experience, and potential churn.

First-party content citation rate

Whether LLMs are citing your own help centre or someone else's when your customers are stuck.

Subcategory distribution

What proportion of your customer LLM volume is Failure-State vs Expansion vs Operational.

Failure-State volume trend

The week-over-week change in Failure-State volume, which often spikes hours before your support queue reflects an underlying product issue.
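Two of these metrics are simple ratios, and a short sketch shows what goes into them. The input shapes, function names, and the `example.com` domain below are hypothetical. The sketch assumes you already have, for each sampled AI answer, the list of URLs it cited, and for each prompt, a category and a volume estimate.

```python
from collections import Counter
from urllib.parse import urlparse

def first_party_citation_rate(cited_urls_per_answer, first_party_domains):
    """Share of AI answers that cite at least one URL on a first-party domain."""
    if not cited_urls_per_answer:
        return 0.0
    hits = 0
    for urls in cited_urls_per_answer:
        hosts = {urlparse(u).hostname or "" for u in urls}
        # Match the domain itself or any subdomain (help.example.com, www.example.com).
        if any(h == d or h.endswith("." + d) for h in hosts for d in first_party_domains):
            hits += 1
    return hits / len(cited_urls_per_answer)

def subcategory_distribution(volume_by_prompt):
    """Turn (category, monthly_volume) pairs into each category's share of total volume."""
    totals = Counter()
    for category, volume in volume_by_prompt:
        totals[category] += volume
    grand = sum(totals.values())
    return {c: v / grand for c, v in totals.items()} if grand else {}

# Hypothetical sample: four AI answers and the URLs each one cited.
answers = [
    ["https://help.example.com/claims"],
    ["https://www.reddit.com/r/insurance"],
    ["https://example.com/app-status", "https://other.org/guide"],
    [],
]
rate = first_party_citation_rate(answers, {"example.com"})

shares = subcategory_distribution([
    ("failure_state", 300),
    ("operational_success", 500),
    ("failure_state", 200),
])
```

Counting an answer once, however many first-party URLs it cites, keeps the rate comparable across platforms that cite different numbers of sources per answer. That is a design choice in this sketch, not something the article specifies.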

Figure 3: Failure-state LLM volume vs support ticket load, i.e., AI query spikes acting as a leading indicator 24-72 hours before support surge.

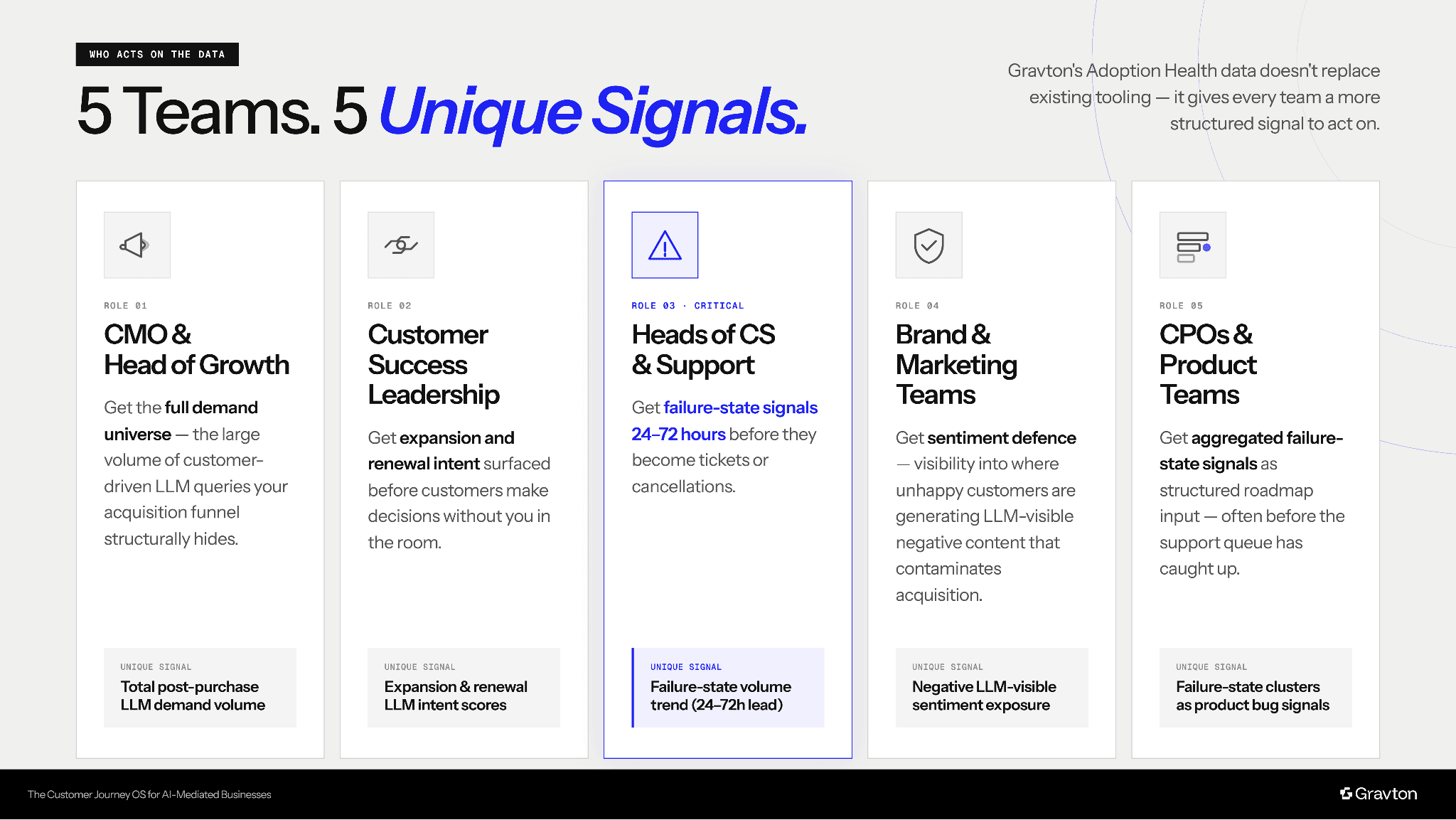

Q: Who inside my organisation acts on post-purchase AI visibility data?

Gravton's Adoption Health data has five distinct owners, each getting signals they can't get anywhere else.

CMOs and Heads of Growth

Get the full demand universe, that is, the large volume of customer-driven LLM queries that your acquisition funnel structurally hides.

Customer Success leadership

Gets expansion and renewal intent surfaced before customers make decisions without you in the room.

Heads of CS and Support

Get failure-state signals 24 to 72 hours before they become tickets or cancellations.

Brand and Marketing teams

Get sentiment defense, that is, visibility into where unhappy customers are generating LLM-visible negative content that contaminates your acquisition funnel.

CPOs and Product teams

Get aggregated failure-state signals as structured input to the roadmap, often before the support queue has caught up.

Gravton doesn't replace any of these teams' existing tooling. It gives all of them a more structured signal to act on.

Figure 4: 5 teams and 5 unique signals, i.e., how different functions act on post-purchase AI visibility data.
